
Introduction

The current conversation around Agentic AI Process Automation sits at an uncomfortable intersection of ambition and reality.

On one side, enterprises are promised autonomous systems capable of planning, deciding, and executing complex work across systems. On the other, analysts expect that more than 40% of today’s agentic AI projects may be canceled by 2027 due to cost, scaling complexity, or unmanaged risk. This tension matters because agentic AI represents a structural shift in Business Process Management.

Traditional process automation follows predefined paths. It executes what was designed – no more, no less. Agentic systems operate differently: they pursue goals, interpret context, and determine how to reach an outcome. The shift from rule-driven execution to goal-driven reasoning creates enormous opportunity – but also structural risk.

The question is not whether agentic AI will enter the enterprise. The question is whether organizations can embed it into controlled process architectures that allow autonomy without sacrificing oversight.

Why Agentic AI Is Challenging Traditional Process Automation

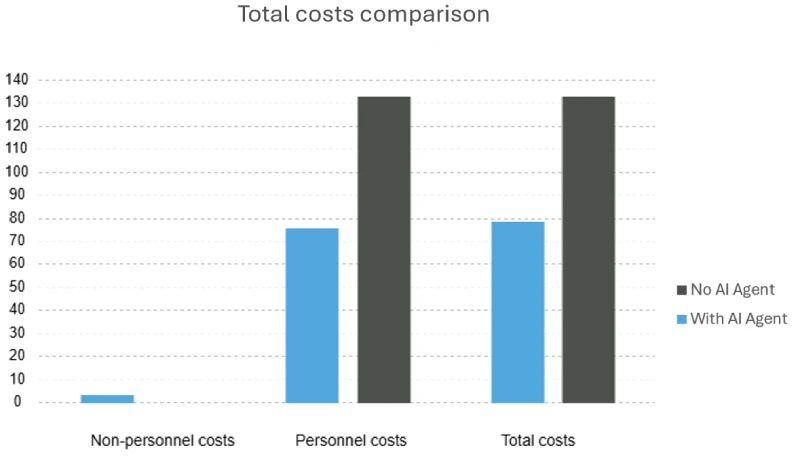

A common misconception is to treat ‘agentic AI’ as a single capability. In reality, it builds on Large Language Models (LLMs), a subset of Generative AI. LLMs can interpret language, generate content, and support reasoning. But on their own, they are passive: they respond to prompts but do not act independently.

In recent years, organizations have focused on making LLMs more useful inside the business: granting access to operational knowledge, improving contextual understanding, and enabling them to ‘speak the language of the business’. Models were trained or configured to understand internal terminology, processes, and documentation so they could assist employees more effectively. Now the ambition is shifting.

We are no longer asking AI to assist. We are defining objectives, assigning responsibilities, and specifying what actions are allowed. In other words, we are turning language models into agents that can pursue goals within enterprise workflows.

Conversational AI Agent

From Language Model to Autonomous Agent

To become an AI agent, an LLM must be extended with additional capabilities:

- Perception and goal setting: accessing and extracting relevant information from the environment to set a goal

- Reasoning and planning: breaking complex objectives into structured steps

- Autonomy and action: selecting tools and executing actions without constant human intervention

Once triggered, an agent can determine what to do next and which systems or tools to call – for example, retrieving leads from a CRM system or initiating a compliance check. This shift transforms language models from assistants into goal-driven actors within enterprise workflows.
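
To make the perception, planning, and action loop concrete, here is a minimal Python sketch. The tools (`fetch_crm_leads`, `run_compliance_check`) are hypothetical stubs, and `choose_next_tool` stands in for the LLM's reasoning with hard-coded rules; a real agent would let the model pick from the tool list. The `max_steps` cap is an added assumption so the loop always terminates.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]

# Hypothetical tools; in practice these would wrap CRM and compliance system APIs.
def fetch_crm_leads(state: State) -> None:
    state["leads"] = ["ACME Corp", "Globex"]

def run_compliance_check(state: State) -> None:
    state["checked"] = {lead: "pending review" for lead in state["leads"]}

TOOLS: Dict[str, Callable[[State], None]] = {
    "fetch_crm_leads": fetch_crm_leads,
    "run_compliance_check": run_compliance_check,
}

def choose_next_tool(goal: str, state: State) -> Optional[str]:
    """Stand-in for LLM reasoning: pick the next tool based on what is known so far."""
    if "leads" not in state:
        return "fetch_crm_leads"
    if "checked" not in state:
        return "run_compliance_check"
    return None  # goal reached: nothing left to do

def run_agent(goal: str, max_steps: int = 10) -> State:
    state: State = {"goal": goal, "trace": []}  # perception: the initial context
    for _ in range(max_steps):
        tool = choose_next_tool(goal, state)  # reasoning and planning
        if tool is None:
            break
        TOOLS[tool](state)                    # autonomy and action
        state["trace"].append(tool)
    return state

result = run_agent("screen new leads for compliance")
print(result["trace"])  # ['fetch_crm_leads', 'run_compliance_check']
```

The point of the sketch is the shape of the loop: no step sequence is scripted in advance, yet every action still has to come from a known tool registry.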

The promise is compelling: AI-driven digital workers that operate continuously, understand business objectives, access enterprise systems, and execute work with minimal supervision. But greater autonomy introduces greater responsibility.

The more decision-making authority is delegated to AI agents, the more clarity, traceability, and accountability must be built into their operating environment. Without defined process boundaries, agent behavior can become opaque – a “black box” making decisions that are difficult to explain, audit, or align with intended process flows.

And that is precisely why agentic AI challenges traditional process automation.

What Is Agentic AI Process Automation?

At its core, Agentic AI Process Automation means embedding autonomous AI agents into clearly defined business processes. It combines goal-driven behavior with explicitly defined boundaries.

Traditional automation executes only what has been explicitly designed. Every step, rule, and decision branch must be specified in advance for the system to act.

Agentic systems, by contrast, work toward a defined objective. They can interpret context, select tools, and determine the sequence of actions needed to achieve the goal – within approved limits. Autonomy, however, does not imply the absence of control.

For agentic automation to function in enterprise environments, key elements must be established upfront: the process objective, the tasks required to achieve it, the data sources an agent may access, the actions it is permitted to take, and when human approval or escalation is required.
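
One way to make these upfront decisions explicit is to capture them as data that the runtime can check. The sketch below assumes a hypothetical `AgentTaskSpec` schema; the field names are illustrative and do not correspond to any particular platform, including ADONIS.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Tuple

@dataclass(frozen=True)
class AgentTaskSpec:
    """Boundaries defined before an agent may run (hypothetical schema)."""
    objective: str
    tasks: Tuple[str, ...]
    allowed_data_sources: FrozenSet[str]
    permitted_actions: FrozenSet[str]
    # Permitted actions that still need human approval before they take effect.
    approval_required: FrozenSet[str] = field(default_factory=frozenset)

    def __post_init__(self) -> None:
        # Approval rules must only refer to actions that are permitted at all.
        unknown = self.approval_required - self.permitted_actions
        if unknown:
            raise ValueError(f"approval rules reference unpermitted actions: {sorted(unknown)}")

    def needs_approval(self, action: str) -> bool:
        return action in self.approval_required

spec = AgentTaskSpec(
    objective="Prepare a customer background check summary",
    tasks=("validate documents", "source background data", "draft risk summary"),
    allowed_data_sources=frozenset({"crm", "sanctions_list"}),
    permitted_actions=frozenset({"read", "summarize", "store_report"}),
    approval_required=frozenset({"store_report"}),
)
print(spec.needs_approval("store_report"))  # True
```

Freezing the spec reflects the idea that boundaries are set before execution, not adjusted by the agent at run time.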

Without boundaries, autonomy creates uncertainty. With deliberate process design, it scales.

How Agentic AI Changes Process Automation

Introducing AI agents into workflows does not eliminate process design, but it changes what process design must account for.

Traditionally, processes were described either for human execution, where experience fills gaps, or for static rule engines, where every condition must be explicitly scripted. Agentic automation changes the nature of execution – introducing autonomous reasoning within defined boundaries.

The structure remains explicit. Objectives, constraints, escalation paths, and available tools are still defined in the process model. But decision logic inside tasks is no longer fully hard-coded. Instead of executing a fixed rule tree, the agent interprets context, reasons over relevant data, and determines how to achieve the objective within defined limits.

The process still defines what is allowed. The agent determines how to proceed within that space.
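
This split between "what is allowed" and "how to proceed" can be enforced mechanically: the agent proposes a plan, and the process boundaries filter it before anything runs. In this minimal sketch, `ProposedAction`, `ALLOWED_ACTIONS`, and `ALLOWED_SOURCES` are hypothetical names; a production system would more likely escalate rejected steps than silently drop them.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class ProposedAction:
    name: str
    data_source: str

# Hypothetical boundaries taken from the process model.
ALLOWED_ACTIONS = {"read", "summarize"}
ALLOWED_SOURCES = {"crm", "sanctions_list"}

def filter_plan(plan: List[ProposedAction]) -> Tuple[List[ProposedAction], List[ProposedAction]]:
    """Split the agent's plan into steps the process allows and steps it rejects."""
    accepted: List[ProposedAction] = []
    rejected: List[ProposedAction] = []
    for step in plan:
        if step.name in ALLOWED_ACTIONS and step.data_source in ALLOWED_SOURCES:
            accepted.append(step)
        else:
            rejected.append(step)
    return accepted, rejected

# The agent chose these steps and their order; the process decides which may run.
plan = [
    ProposedAction("read", "crm"),
    ProposedAction("summarize", "crm"),
    ProposedAction("send_email", "crm"),            # action not in the process model
    ProposedAction("read", "social_media_scrape"),  # source not approved
]
accepted, rejected = filter_plan(plan)
print([step.name for step in accepted])  # ['read', 'summarize']
print(len(rejected))                     # 2
```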

This creates controlled flexibility. Execution can adapt to situational context without abandoning governance. Human roles shift accordingly: from supervising every operational step to overseeing exceptions, approvals, and performance.

At the same time, visibility becomes more critical. As reasoning becomes situational, decisions must remain transparent and traceable.
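
Traceability usually starts with recording each decision together with the context it was based on. The sketch below assumes a simple in-memory log with hypothetical field names; a real deployment would likely persist records to durable storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List

@dataclass(frozen=True)
class DecisionRecord:
    case_id: str
    step: str
    inputs_used: List[str]
    tool_called: str
    rationale: str  # the agent's stated reasoning, kept for later review
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

class DecisionLog:
    """In-memory log that only supports appending and reading records."""

    def __init__(self) -> None:
        self._records: List[DecisionRecord] = []

    def record(self, rec: DecisionRecord) -> None:
        self._records.append(rec)

    def for_case(self, case_id: str) -> List[DecisionRecord]:
        return [r for r in self._records if r.case_id == case_id]

    def to_jsonl(self) -> str:
        # One JSON object per line, a convenient format for audit exports.
        return "\n".join(json.dumps(asdict(r)) for r in self._records)

log = DecisionLog()
log.record(DecisionRecord(
    case_id="C-001",
    step="source background data",
    inputs_used=["crm"],
    tool_called="fetch_customer_profile",
    rationale="Customer ID present; CRM is the approved source for this step",
))
print(len(log.for_case("C-001")))  # 1
```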

Execution becomes more adaptable, but also more demanding to govern.

The Risks of Uncontrolled Agentic Automation

Without defined boundaries, adaptability quickly turns into exposure. When GenAI agents operate without explicit process governance, structural risks emerge – not because the technology lacks intelligence, but because autonomy lacks containment.

Inconsistent Outcomes

Agents may interpret the same information in different ways, use different tools for the same task, or rely on incomplete or incorrect data sources. This can lead to inconsistent or incorrect results across similar cases.

Unrestricted Autonomy

When pursuing a defined objective, agents may overreach by selecting unnecessary tools, exploring unintended process paths, or taking actions simply because they are technically possible.

Unclear Accountability

Agents may make confident decisions based on weak assumptions, outdated information, or hallucinations, which excessive context can make more likely. As execution becomes automated, tracing who is accountable for a given decision becomes more complex.

Irresponsible Data Usage

Agents may rely on incorrect data sources, fail to recognize data sensitivity when combining information, or hallucinate when exposed to excessive or unstructured context.

Escalating Operational Risk

Because agents operate continuously and at machine speed, incorrect interpretations or tool decisions can quickly spread across interconnected systems. Without timely detection and intervention, small errors can escalate into broader operational disruptions.

These challenges explain why many GenAI initiatives struggle when moving from controlled pilots to enterprise-scale deployment. The limiting factor is rarely the intelligence itself – it is the absence of governance around it. Success depends on embedding autonomy within both the organization’s process architecture and its broader enterprise architecture, not treating AI as a standalone capability.

Why Process Governance Becomes Critical

Autonomy alone is not enough. It must be embedded within well-defined process governance.

Reliable outcomes

Clear goals, defined task logic, and explicit success criteria guide agent behavior toward intended business results.
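
Explicit success criteria can be checked automatically before an agent's output moves to the next step. This sketch validates a hypothetical risk-summary structure; the required fields and risk levels are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, List

# Hypothetical success criteria for an agent-produced risk summary.
REQUIRED_FIELDS = ("customer_id", "risk_level", "sources", "rationale")
VALID_RISK_LEVELS = {"low", "medium", "high"}

@dataclass
class CheckResult:
    passed: bool
    problems: List[str]

def check_risk_summary(summary: Dict[str, Any]) -> CheckResult:
    """Return whether the summary meets the criteria, and why not if it fails."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if not summary.get(name)]
    level = summary.get("risk_level")
    if level and level not in VALID_RISK_LEVELS:
        problems.append(f"invalid risk level: {level}")
    return CheckResult(passed=not problems, problems=problems)

good = {"customer_id": "C-001", "risk_level": "medium",
        "sources": ["crm", "sanctions_list"], "rationale": "No adverse findings in approved sources"}
bad = {"customer_id": "C-002", "risk_level": "unclear"}
print(check_risk_summary(good).passed)  # True
print(check_risk_summary(bad).problems)
# ['missing field: sources', 'missing field: rationale', 'invalid risk level: unclear']
```

Because the same criteria apply to every case, this kind of check also counters the inconsistent outcomes described earlier.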

Safe autonomy

Process models define where independent action is permitted and where human escalation is required.
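
The boundary between independent action and escalation can be written down as a routing rule. The thresholds below are hypothetical, and the sketch assumes some upstream check produces a confidence score; how that score is obtained is outside its scope.

```python
from enum import Enum

class Route(Enum):
    AUTONOMOUS = "agent proceeds"
    HUMAN_REVIEW = "escalate to a human"

# Hypothetical thresholds; real values would come from the process model and risk policy.
MIN_CONFIDENCE = 0.8
ALWAYS_ESCALATE = {"high"}

def route(risk_level: str, confidence: float) -> Route:
    """Decide whether the agent may act on its own or must hand over to a person."""
    if risk_level in ALWAYS_ESCALATE or confidence < MIN_CONFIDENCE:
        return Route.HUMAN_REVIEW
    return Route.AUTONOMOUS

print(route("low", 0.93))   # Route.AUTONOMOUS
print(route("low", 0.55))   # Route.HUMAN_REVIEW
print(route("high", 0.99))  # Route.HUMAN_REVIEW
```

High-risk cases escalate regardless of confidence, so a confidently wrong agent still cannot close them alone.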

Accountable decisions

Role ownership and approval checkpoints preserve responsibility even when execution is automated.

Responsible data usage

Governance policies determine which data sources may be accessed and how information can be combined within a workflow.
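
Data-usage rules can be expressed per workflow step, including sources that must never be combined. The step names follow the background check example later in this article, but the source names and the forbidden combination are hypothetical. Anything not explicitly allowed is denied.

```python
from typing import Dict, FrozenSet, List, Set

# Hypothetical policy: the sources each workflow step may read ...
STEP_SOURCES: Dict[str, Set[str]] = {
    "validate documents": {"uploaded_documents"},
    "source background data": {"crm", "sanctions_list", "credit_bureau", "marketing_profile"},
}
# ... and sources that are individually allowed but must never be combined in one step.
FORBIDDEN_COMBINATIONS: List[FrozenSet[str]] = [
    frozenset({"credit_bureau", "marketing_profile"}),
]

def check_access(step: str, requested: Set[str]) -> List[str]:
    """List policy violations; an empty list means the request is allowed."""
    allowed = STEP_SOURCES.get(step, set())  # unknown steps get no access at all
    violations = [f"{src} not allowed in step '{step}'" for src in sorted(requested - allowed)]
    for combo in FORBIDDEN_COMBINATIONS:
        if combo <= requested:
            violations.append(f"combining {sorted(combo)} is not allowed")
    return violations

print(check_access("source background data", {"crm", "sanctions_list"}))  # []
print(check_access("validate documents", {"crm"}))
# ["crm not allowed in step 'validate documents'"]
```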

Operational resilience

Monitoring and analytics make adaptive behavior observable, allowing deviations to be detected and corrected early.
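
Making adaptive behavior observable can be as simple as tracking a rolling deviation rate and pausing the agent when it crosses a threshold, which also limits how far an error can spread across connected systems. The window size, threshold, and the definition of a "deviation" are assumptions in this sketch.

```python
from collections import deque
from typing import Deque

class DeviationMonitor:
    """Pauses an agent when too many recent outcomes deviate from the process.

    What counts as a deviation (a failed success check, a rejected action,
    a reversed decision) is a hypothetical choice left to the process owner.
    """

    def __init__(self, window: int = 20, max_rate: float = 0.2) -> None:
        self.outcomes: Deque[bool] = deque(maxlen=window)
        self.max_rate = max_rate
        self.paused = False

    def deviation_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def observe(self, deviated: bool) -> None:
        self.outcomes.append(deviated)
        # Judge only once the window is full, so a single early error does not trip it.
        if len(self.outcomes) == self.outcomes.maxlen and self.deviation_rate() > self.max_rate:
            self.paused = True  # stays paused until a human investigates and resets it

monitor = DeviationMonitor(window=5, max_rate=0.2)
for deviated in [False, False, True, False, True]:
    monitor.observe(deviated)
print(monitor.deviation_rate(), monitor.paused)  # 0.4 True
```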

Lifecycle-driven evolution

Embedding agents within the broader Process Management Lifecycle (from design and analysis to execution, monitoring, and continuous optimization) ensures that autonomy remains aligned with business intent over time.

In practice, platforms such as ADONIS combine AI-assisted modeling and analysis with transparent lifecycle governance – creating the foundation for enterprise-ready agentic automation.

What a Business Process Could Look Like in 2026

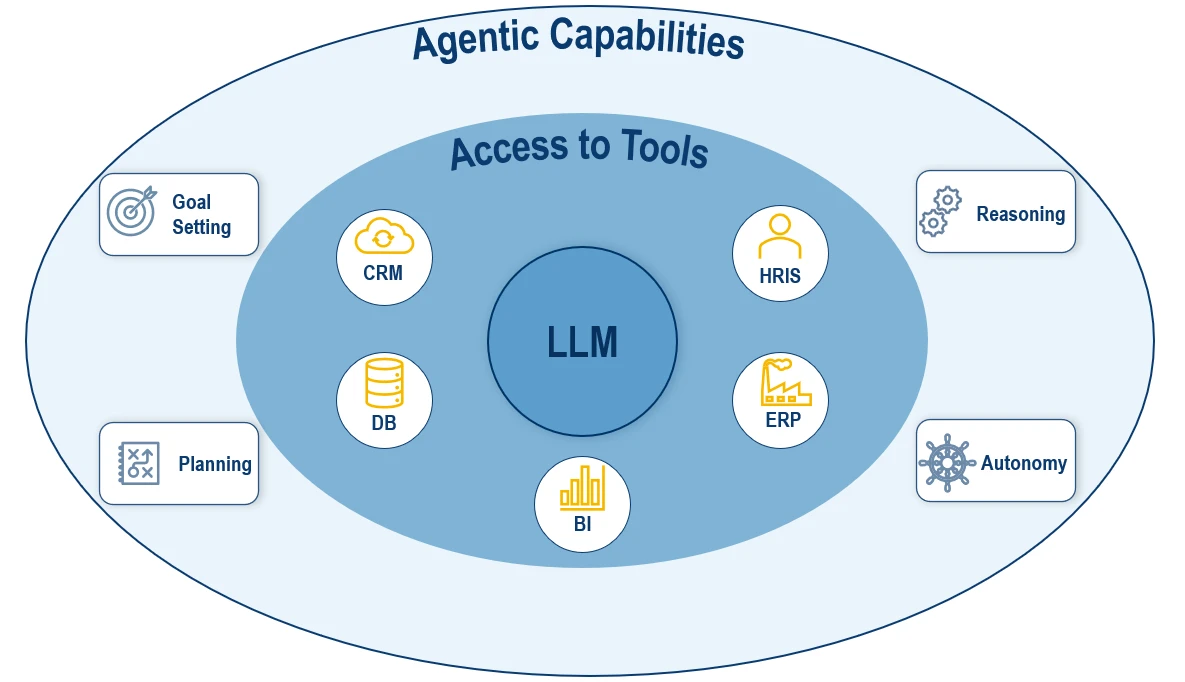

To illustrate the practical impact of Agentic AI Process Automation, consider a background check process in a regulated industry such as financial services.

Three roles are involved:

- a Sales Officer collects documentation

- a Compliance Analyst conducts the analysis and prepares a risk summary

- and a Senior Compliance Officer makes the final decision

ADONIS Business Process Diagram: Customer Background Check

The Compliance Analyst’s activities are particularly suitable for structured agentic automation. They include:

- Validate and summarize information

- Perform background data sourcing

- Summarize data and prepare risk assessment recommendation

- Complete and store background check documentation

All of these steps support the final decision rather than determine it. Final accountability remains with the Senior Compliance Officer. When modeled and simulated using ADONIS Process Simulation, the impact becomes quantifiable rather than hypothetical.
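
The division of labor in this example can be sketched in code: the agent performs the analyst's preparation tasks, while only the Senior Compliance Officer role can record the final decision. All function names, the canned stub results, and the ordering (documentation stored after the decision) are hypothetical; this is not how ADONIS models or executes the process.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class CaseFile:
    customer_id: str
    documents: List[str]  # collected by the Sales Officer
    summary: Optional[str] = None
    background: Optional[List[str]] = None
    recommendation: Optional[str] = None
    decision: Optional[str] = None
    decided_by: Optional[str] = None

# Agent-performed analyst tasks (hypothetical stubs with canned results).
def validate_and_summarize(case: CaseFile) -> None:
    if not case.documents:
        raise ValueError("no documentation provided")
    case.summary = f"{len(case.documents)} documents received"

def source_background_data(case: CaseFile) -> None:
    case.background = ["sanctions list: no match", "company registry: active"]

def prepare_recommendation(case: CaseFile) -> None:
    case.recommendation = "low risk: approve"  # a recommendation, not a decision

def store_documentation(case: CaseFile, archive: Dict[str, CaseFile]) -> None:
    archive[case.customer_id] = case

# The human checkpoint: only this role may record the final decision.
def final_decision(case: CaseFile, role: str, approve: bool) -> None:
    if role != "Senior Compliance Officer":
        raise PermissionError(f"{role} may not make the final decision")
    case.decision = "approved" if approve else "rejected"
    case.decided_by = role

archive: Dict[str, CaseFile] = {}
case = CaseFile("C-001", ["passport.pdf", "proof_of_address.pdf"])
for task in (validate_and_summarize, source_background_data, prepare_recommendation):
    task(case)
final_decision(case, role="Senior Compliance Officer", approve=True)
store_documentation(case, archive)
print(archive["C-001"].decision, archive["C-001"].decided_by)  # approved Senior Compliance Officer
```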

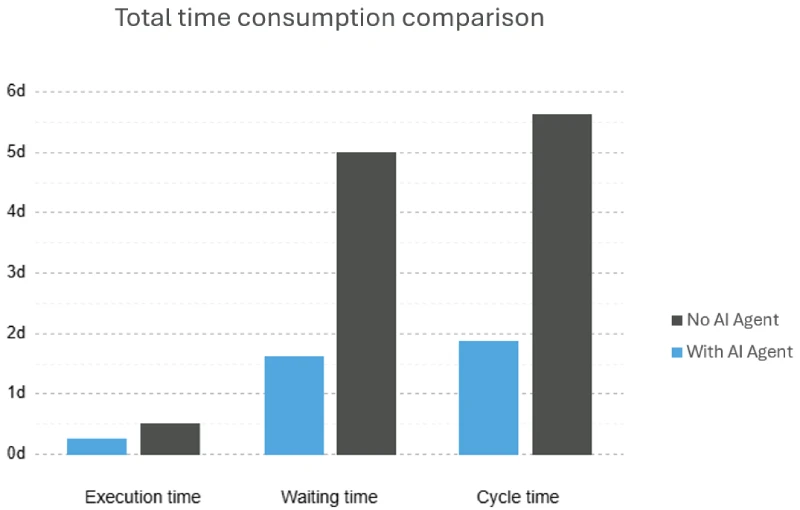

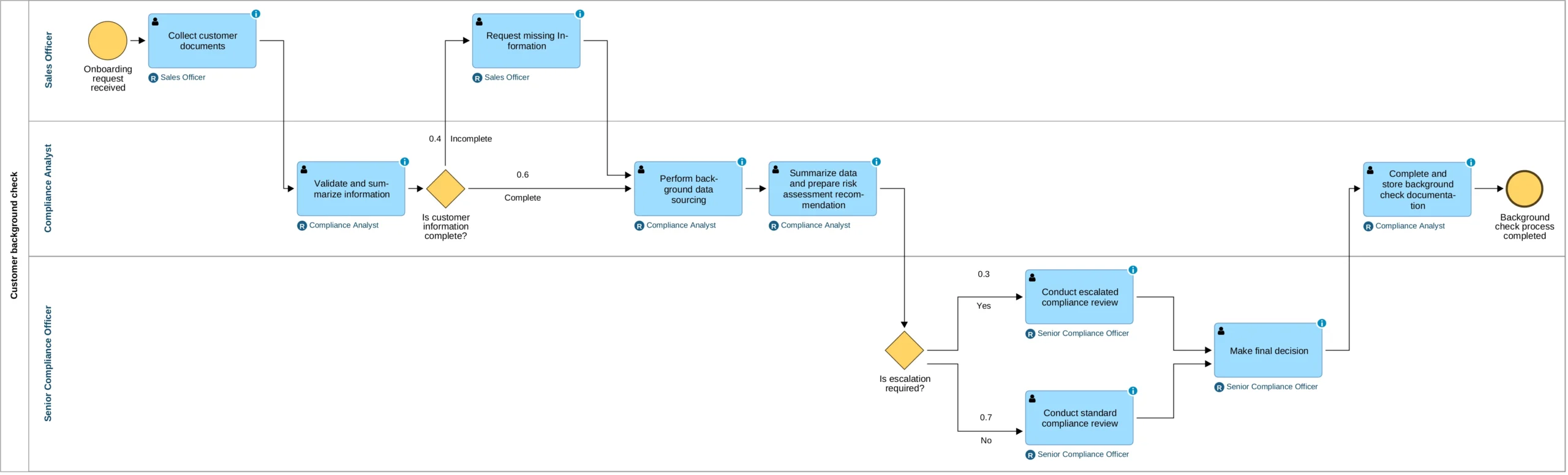

The simulation compares the traditional setup with a scenario in which an AI agent performs the analyst’s tasks.

Total work time and cost consumption

The results show measurable improvements:

- Execution time reduced by 55%

- Waiting time reduced by 80%

- Personnel cost reduced by 43%

Cycle time decreases because preparation work no longer depends on manual availability. Cost savings come from reallocating human effort toward higher-value oversight and decision-making, but the impact goes beyond cost reduction.
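
Each percentage above is a relative reduction against the traditional baseline, where X is the simulated time or cost for the metric in question:

$$
\text{reduction} = \frac{X_{\text{traditional}} - X_{\text{agentic}}}{X_{\text{traditional}}} \times 100\%
$$

A 55% reduction in execution time therefore means the agentic scenario needs 0.45 of the baseline execution time, and an 80% reduction in waiting time leaves one fifth of the original waiting time. If cycle time is taken as execution time plus waiting time (an assumption about how the simulation aggregates them), the overall cycle-time reduction is a weighted average of the two figures and falls between 55% and 80%, depending on how the baseline splits between execution and waiting.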

By shifting repetitive preparation work to AI agents, organizations increase operational capacity without proportionally increasing headcount. This enables scalable growth, improved margins, and faster response times under rising demand. Most importantly, responsibility for the final decision remains unchanged.

This example illustrates what Agentic AI Process Automation looks like when embedded within structured process governance: GenAI operating inside defined boundaries, with measurable impact and preserved accountability.

This is not unbounded automation. It is structured, simulated, and disciplined augmentation.

Is Agentic AI Process Automation the Future?

Agentic AI is already entering enterprise workflows. The real question is not whether organizations will adopt it, but whether their workflows are structured around BPM principles that enable scalable, governed automation.

Where processes are clearly modeled, boundaries are defined, and accountability remains explicit, agentic automation can deliver measurable improvements in speed, efficiency, scalability, and adaptability. Where experimentation replaces structure, initiatives are likely to stall before reaching enterprise scale.

Autonomy without structure is experimentation. Autonomy within structured process architectures is transformation.

The future of enterprise automation will not belong to the most autonomous systems – it will belong to the organizations that know how to govern GenAI deliberately and embed it within controlled process architectures.